A Treasury of Terminator Images For Stories About Autonomous Drones

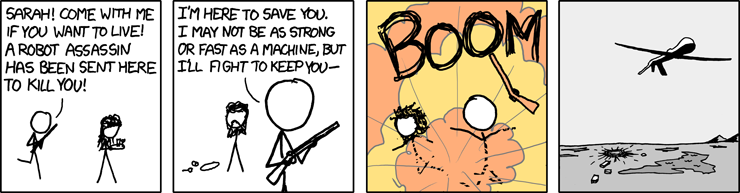

First off, I should point out that XKCD, the venerable stick people comic about math and science, has by far the best treatment of exactly what is so wrong (if fun) about the Terminator films:

The point being: actual robot assassins will look like drones do now: relatively downmarket, slow-moving, and pretty familiar. Fast-flying jet drones are not any good for what people worry about when it comes to drones: they cannot move low and slow over a target, meticulously collecting intelligence in order to build a targeting profile for a strike.

There’s another bonus to drones working this way (and they will, for the foreseeable future): the ones that carry weapons are big by necessity, easy to spot, and (especially for a national military) rather trivial to shoot down. That means that they really cannot fly places where they are unwelcome: when even super-secret stealth drones have drifted into Iranian airspace from Afghanistan, for example, they’ve been grounded and captured for study.

So lost in all of the heated rhetoric about drones and drone strikes and the era of assassination is the very simple reality that drones only fly with consent — no one “forces” them on a country (not even Pakistan), and when they do go somewhere they are unwanted they are swatted from the sky.

So when people talk about “killer robots,” what they really mean are the usual drones we fly right now, only with better targeting packages and the ability to complete their missions should humans be cut out of the decision loop somehow. But that reality doesn’t really scare people very effectively so the activists trying to strangle autonomy research and remote flight technology in its infancy have come up with a much more effective scare tactic: the Terminator.

Granted, there is some variation on this theme, including a wonderful shoutout to RoboCop.

Even so (I mean, really? Ultron?), the point is clear: autonomous robots are basically evil incarnate, and there is no possible military use for them other than apocalypse.

Does it matter that the “experts” in question have either no experience at all with artificial intelligence systems (such as Stephen Hawking or Elon Musk) or no experience at all with military policy (most of the computer scientists)? No.

These images are used for a very specific editorial purpose: not to accurately represent the potential — both beneficial and malign — of increased automation on certain weapons platforms, but to predispose readers to immediately associate any advancement with, essentially, the destruction of humankind.

Granted! Some people think “strong,” or “general” AI (that is, a piece of software that can think and innovate on its own, and essentially self-program) is inherently destructive to humanity. But that is far from a given, and the very idea of a destructive singularity is based almost entirely on science fiction like the Matrix — much like with the Terminator, it is great as entertainment but terrible as an analytic predictor of the future.

Something like the Matrix is stupid, from a machine perspective. So, too, is the Terminator. The human biped form is one of the least efficient for stealthily or efficiently targeting people to kill them in warfare. And in those stories, the way the machines decide to wipe out humanity is dumb as well: they would essentially kill themselves, either by starving themselves of their own power supply or by frying themselves with the electromagnetic pulses that result from global thermonuclear war.

But what makes this use of imagery even worse, from an editorial perspective, is how it is not just a silly bit of emotional manipulation. It is actively deceitful:

Put simply: the terminator is not “feasible within years, not decades.” And while drones are easy to build, they’re also easy to kill, so the nuclear-weapons-style concerns about proliferation and danger are almost entirely hot air. The boring, reassuring reality is that humanoid robots are either ponderously slow, or they are so unstable that they are useless outside of confined settings (like a boat or collapsed building) where they could serve a search-and-rescue function. Path detection and image recognition among other robots are similarly rudimentary: self-driving cars function, in part, because they don’t need to recognize individuals, only the outline of a person and the outline of a car. And heaven help you if a humanoid robot were to aim a gun from its hand. There’s no point! The idea that a modern military, either in the West or in Russia or China, would build a deadly robot that senses the world around it so poorly that it would kill its own side is just as science fictional as a terminator: fodder for an entertaining action film but so unlikely as to be laughable.

But the singularity, you say! Ultron was strong AI, and we might be swallowed up or subsumed by our own inventions! Well sure, we should consider whether the singularity (the point at which computer systems outside of human control shape and determine our fate) is already upon us. And there is good reason to think it is: high-frequency stock trading already controls global financial markets; our shopping habits and the marketing we are exposed to and the movies we watch and like… all are determined entirely by computer programs; and so on. More to the point, all of us have become reliant on our phones, which enable us to “off-load” our memories, schedules, contacts, and knowledge into an easily queried format. We interact via devices, select mates through computers, and rely on computers to get our cars safely (or not) from point A to point B.

Even in the military, the filtering, selection, and interpretation of information is so automated that it is unlikely anyone could trace, from beginning to end, exactly where there was meaningful human input in identifying and acting upon information before the point at which action became inevitable. U.S. Navy vessels have automated machine guns that track and fire at incoming anti-ship missiles without human input; U.S. Army bases are guarded by “defensive” weapons that automatically track and return fire on suspected mortar emplacements; high-flying blimps automatically assemble targeting packages and threat tracking for the surrounding countryside; soon, eyepieces will search a wide range of radiation spectra to tell the soldier who is a threat and who is not. These processes are, in any meaningful sense, already detached from human judgment: we trust that the inputs our gadgets and sensors and software give to soldiers and commanders are accurate and capable of being acted upon.

But even if the singularity remains distant, who cares? Far from a terminator-like image, autonomous weapons will be mundane: they simply automate yet another process at the end of a very long series of automated processes. And just like with other processes in a bureaucracy (the military is a bureaucracy, no matter how much people try to portray it as something more malign), there are controls, norms, operating procedures, and laws governing how they will or should be used. There are serious issues that need to be worked out before anyone will accept a fully autonomous machine, including whether it could ever even function.

Unlike nuclear weapons, autonomous weapons are still theory; people can theorize what they might look like (I have my own, boring idea), or they might be stuck in fantasy and think Austrian bodybuilder skeletons will march over their skulls shooting fricking laser beams out of their hands. I mean, the great thing about an unsupportable, unproven theory is that you can project whatever you want onto it: your hopes, fears, assumptions, and red lines.

The proliferation of terminator imagery is really just the proliferation of luddism in the public sphere: robots are scary. People are scary too (we nuked entire cities before there were robots to worry about), but because robots sit on the wrong end of the uncanny valley, so clearly mechanical and foreign, they evoke a special form of revulsion. It is that visceral revulsion that these images reflect, not any grounded concern about, or even opposition to, the development of advanced software. Just animal fear. An “ick” reaction, one that is currently overpowering the discourse and silencing considered discussion of the issue.

Maybe in a few years the fog will clear, and we can have a proper public debate about what this all means.